In this post, I describe the final part of the process: namely deploying the trained AI model into "production".

Google Cloud ML Engine

As the title of the post suggests, I opted for the Google Cloud ML Engine as the production infrastructure for one simple reason: I wanted a serverless solution, so that I would pay on demand only for the computing resources I actually used, rather than paying for continuously running virtual machine(s) (or Docker container(s)) whether I was using them or not.

From what I could ascertain at the time I was deciding, Google Cloud ML Engine was the only available solution providing such on-demand scaling (importantly, reducing my assigned resources, and costs, effectively to zero when not in use by me). Since then, AWS SageMaker has come on the scene, but I could not determine from its documentation whether its computing resources are similarly auto-scaled (from as low as zero). If anyone knows the answer to this, please advise via the Comments section below.

GOTCHA: one of the important limitations of the Google Cloud ML Engine for online prediction is that it auto-allocates single core CPU-based nodes (virtual machines), rather than GPUs. This means that the prediction is slow -- especially on the (relatively complex) TensorFlow object detector model which I'm using (multiple minutes per prediction!). I suppose this may be the price one has to pay for the on-demand flexibility, but since Google obviously has GPUs and TPUs at their disposal, it would be a welcome improvement if they were to offer such on their Cloud ML Engine. Maybe that will come...

Deploying the TensorFlow Model into Google Cloud ML

Exporting the Trained Model from TensorFlow

The first step is to export the trained model in the appropriate format. Picking up where I left off in the previous post, the export_inference_graph.py Python script included with the Google Object Detection API does this, and can be called from the Ubuntu console as follows:

python object_detection/export_inference_graph.py \
    --input_type encoded_image_string_tensor \
    --pipeline_config_path=/risklogical/DeeplearningImages/models/faster_rcnn_inception_resnet_v2_atrous_coco_11_06_2017/faster_rcnn.config \
    --trained_checkpoint_prefix=/risklogical/DeeplearningImages/models/faster_rcnn_inception_resnet_v2_atrous_coco_11_06_2017/train/model.ckpt-46066 \
    --output_directory /risklogical/DeeplearningImages/Outputs/PR_Detector_JustP_RCNN_ForDeploy

where the paths and filenames are obviously substituted with your own. GOTCHA: in the above code snippet, it is important to specify

--input_type encoded_image_string_tensor

rather than what I used previously, namely

--input_type image_tensor

since encoded_image_string_tensor allows the image data to be presented to the model as an encoded string inside JSON, via a RESTful web-service (in production), rather than simply via Python code (which I used in the previous post for ad hoc testing of the model after training).
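
As a rough illustration of what that means in practice, here is a minimal Python sketch of the request body that a model exported with encoded_image_string_tensor expects for online prediction: the still-encoded image bytes are converted to base64 text and wrapped in a "b64" object inside an "instances" list, mirroring the JSON string assembled by the C# Image class further down. The function name build_predict_body is hypothetical and exists only for this example.

```python
import base64
import json


def build_predict_body(image_bytes: bytes) -> str:
    """Wrap encoded image bytes (e.g. PNG file contents) as a prediction request body."""
    # Binary data has to travel as base64 text under a "b64" key.
    b64_text = base64.b64encode(image_bytes).decode("ascii")
    return json.dumps({"instances": [{"b64": b64_text}]})


if __name__ == "__main__":
    # Stand-in bytes; in practice these would be read from an image file.
    print(build_predict_body(b"abc"))
```

With image_tensor, by contrast, the model expects an already-decoded pixel array, which is far bulkier to send as JSON.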

DOUBLE GOTCHA: ...and this is perhaps the worst of all the gotchas from the entire project. Namely, the Google object detection TensorFlow models, when exported via the Google API export_inference_graph.py command as presented above, are NOT COMPATIBLE with the Google Cloud ML Engine if the command IS NOT RUN VIA TensorFlow VERSION 1.2. If you happen to use a later version of TensorFlow such as TF 1.3 (as I first did, since that was what I had installed on my Ubuntu development machine for training the model) THE MODEL WILL FAIL on the Google Cloud ML Engine. The workaround is to create a Virtual Environment, install TensorFlow Version 1.2 into that Virtual Environment, and run the export_inference_graph.py command as presented above, from within the Virtual Environment. Perhaps the latest version of TensorFlow has eliminated this annoying incompatibility, but I'm not sure. If it has indeed not yet been resolved (does anyone know?), then c'mon Google!
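
One way to avoid repeating this mistake is a small guard that refuses to export unless the active TensorFlow is a 1.2.x release. The following is only a sketch: is_export_compatible is a hypothetical helper, and it assumes the version string has the usual major.minor.patch form, as returned by tf.__version__.

```python
def is_export_compatible(tf_version: str, required: str = "1.2") -> bool:
    """Return True if the major.minor part of tf_version equals `required`."""
    major_minor = ".".join(tf_version.split(".")[:2])
    return major_minor == required


if __name__ == "__main__":
    # With TensorFlow installed, the string would come from tf.__version__.
    for candidate in ("1.2.1", "1.3.0"):
        print(candidate, is_export_compatible(candidate))
```

Running such a check inside the Virtual Environment, just before calling export_inference_graph.py, would flag an accidental export from TF 1.3.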

Deploying the Exported Model to Google Cloud ML

Creating a Google Compute Cloud Account

In order to complete the next few steps, I had to create an account on Google Compute Cloud. That is all well-documented and the procedure will not be repeated here. The process was straightforward.

Installing the Google Cloud SDK

This is required in order to interact with the Google Compute Cloud from my Ubuntu model-building/training machine e.g., for copying the exported model across. The SDK and installation instructions can be found here. The process was straightforward.

Copying the Exported Model to Google Cloud Storage Platform

I copied the exported model described earlier up to the cloud by issuing the following command from the Ubuntu console:

gsutil cp -r /risklogical/DeeplearningImages/Outputs/PR_Detector_JustP_RCNN_ForDeploy/saved_model/ gs://parkingradar/trained_models/

where the gsutil application is from the Google Cloud SDK. The parameter containing the path to the saved model uses the same path specified when calling the export_inference_graph.py method above (and obviously should be substituted with yours), and the destination on Google Cloud Storage ("gs://...") is where my models are (temporarily) stored in a staging area on the cloud (and obviously should be substituted with yours).

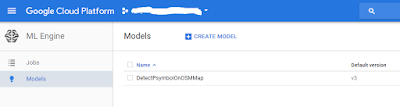

Creating the Model on Google Cloud ML

I then had to create what Google Cloud ML refers to as a 'model' -- but which is really just a container for actual models which are then distinguished by version number -- by issuing the following command from the Ubuntu console:

gcloud ml-engine models create DetectPsymbolOnOSMMap --regions us-central1

where the gcloud application is from the Google Cloud SDK. The name DetectPsymbolOnOSMMap is the (arbitrary) name I gave to my 'model', and the --regions parameter allows me to specify the location of the infrastructure on the Google Compute Cloud (I selected us-central1).

The next step is the key one for creating the actual runtime model on the Google Cloud ML. I did this by issuing the following command from the Ubuntu console:

gcloud ml-engine versions create v3 --model DetectPsymbolOnOSMMap --origin=gs://parkingradar/trained_models/saved_model --runtime-version=1.2

What this command does is create a new runtime version of the exported TensorFlow model held in the temporary cloud staging area (gs://parkingradar/trained_models/saved_model) under the model tag name DetectPsymbolOnOSMMap (version v3 in this example, as I had already created v1 and v2 from earlier prototypes). GOTCHA: it is essential to specify the parameter --runtime-version=1.2 (for the TensorFlow version) since Google Cloud ML does not support later versions of TensorFlow (see earlier DOUBLE GOTCHA).
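
Once deployed, the model and its versions are addressed by a resource path in the online-prediction REST API. The Python sketch below shows how that path is composed, assuming the v1 base URL used by the C# client later in this post; the function name predict_url and the project id "my-project" are placeholders for illustration. Note the plural "versions" segment, and that omitting the version addresses the model's default version.

```python
from typing import Optional

BASE_URL = "https://ml.googleapis.com/v1/"


def predict_url(project: str, model: str, version: Optional[str] = None) -> str:
    """Compose the online-prediction URL; omit the version to use the model default."""
    name = f"projects/{project}/models/{model}"
    if version:
        name += f"/versions/{version}"
    return f"{BASE_URL}{name}:predict"


if __name__ == "__main__":
    print(predict_url("my-project", "DetectPsymbolOnOSMMap"))
    print(predict_url("my-project", "DetectPsymbolOnOSMMap", "v3"))
```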

At this point I found it helpful to login to the Google Compute Cloud portal (using my Google Compute Cloud access credentials) where I can view my deployed models. Here's what the portal looks like for the model version just deployed:

Running the Exported Model on Google Cloud ML via a C# wrapper

Why C# ?

Since the ParkingRadar back-end stack is written in C#, I opted for C# for developing the wrapper code for calling the model on Google Cloud ML. Although Python was the most suitable choice for training and preparing the Deep Learning model for deployment, in my case C# was the natural choice for this next phase.

This reference provides comprehensive example code necessary to get it all working -- mostly. I say mostly, because in that reference they gloss over the issues surrounding authentication via OAUTH2. It turns out that the aspects surrounding authentication were the most awkward to resolve, so I'll provide some details on how to get this working.

Source-code snippet

Here is the C# code-listing containing the essential elements for wrapping the calls to the deployed model on Google Cloud ML (for the specific deployed model and version described above). The code contains all the key components including (i) a convenient class for formatting the image to be sent; (ii) the code required for authentication via OAuth2; (iii) the code to make the actual call via RESTful web-service to the appropriate end-point for the model running on the Google Cloud ML; and (iv) code for interpreting the results returned from the prediction, including parsing the bounding boxes and filtering results against a specified threshold score. The results are packaged into XML, but this is entirely optional and they can instead be packaged into whatever format you wish. Hopefully the code is self-explanatory.

GOTCHA: for reasons unknown (to me at least), specifying the model version caused a JSON parsing failure. The workaround was to leave the version parameter blank in the method call, which forces Google Cloud ML to use the default version assigned to the given model. This default assignment can be easily adjusted via the Google Compute Cloud portal introduced earlier.

using System;
using System.Collections.Generic;
using System.IO;
using System.Net.Http;
using System.Net.Http.Headers;
using System.Text;
using System.Threading.Tasks;
using System.Xml;
using Google.Apis.Auth.OAuth2;
using Newtonsoft.Json;

namespace prediction_client
{

    class Image
    {
        public String imageBase64String { get; set; }
        public String imageAsJsonForTF;// { get; set; }

        //Constructor
        public Image(string imageBase64String)
        {
            this.imageBase64String = imageBase64String;
            this.imageAsJsonForTF = "{\"instances\": [{\"b64\":\"" + this.imageBase64String + "\"}]}";
        }
    }

    class Prediction
    {
        //For object detection
        public List<Double> detection_classes { get; set; }
        public List<Double> detection_boxes { get; set; }
        public List<Double> detection_scores { get; set; }

        public override string ToString()
        {
            return JsonConvert.SerializeObject(this);
        }
    }

    class PredictClient
    {
        private HttpClient client;

        public PredictClient()
        {
            this.client = new HttpClient();
            client.BaseAddress = new Uri("https://ml.googleapis.com/v1/");
            client.DefaultRequestHeaders.Accept.Clear();
            client.DefaultRequestHeaders.Accept.Add(new MediaTypeWithQualityHeaderValue("application/json"));
            //Set infinite timeout for long ML runs (default 100 sec)
            client.Timeout = System.Threading.Timeout.InfiniteTimeSpan;
        }

        public async Task<string> Predict<I, O>(String project, String model, string instances, String version = null)
        {
            //NOTE: the REST resource path uses the plural "versions"
            var version_suffix = version == null ? "" : $"/versions/{version}";
            var model_uri = $"projects/{project}/models/{model}{version_suffix}";
            var predict_uri = $"{model_uri}:predict";

            //See https://developers.google.com/identity/protocols/OAuth2
            //Service Accounts, which is what should be used here rather than DefaultCredentials...
            //Get active credential from credentials json file distributed with app
            //NOTE: need to use App_data folder since cannot put files in bin on Azure web-service...
            string credPath = System.Web.Hosting.HostingEnvironment.MapPath(@"~/App_Data/**********-********.json");
            var json = File.ReadAllText(credPath);
            Newtonsoft.Json.Linq.JObject cr = (Newtonsoft.Json.Linq.JObject)JsonConvert.DeserializeObject(json);

            //Create an explicit ServiceAccountCredential from the e-mail and private key in the json file
            ServiceAccountCredential credential = new ServiceAccountCredential(
                new ServiceAccountCredential.Initializer((string)cr.GetValue("client_email"))
                {
                    Scopes = new[] { "https://www.googleapis.com/auth/cloud-platform" }
                }.FromPrivateKey((string)cr.GetValue("private_key")));

            //Exchange the credential for an OAuth2 bearer token and attach it to the request
            var bearer_token = await credential.GetAccessTokenForRequestAsync().ConfigureAwait(false);
            client.DefaultRequestHeaders.Authorization = new AuthenticationHeaderValue("Bearer", bearer_token);

            //Avoid capturing the calling context, since the caller blocks with Wait()
            var content = new StringContent(instances, Encoding.UTF8, "application/json");
            var responseMessage = await client.PostAsync(predict_uri, content).ConfigureAwait(false);
            responseMessage.EnsureSuccessStatusCode();
            var responseBody = await responseMessage.Content.ReadAsStringAsync().ConfigureAwait(false);
            return responseBody;
        }
    }

    class PredictionCaller
    {
        static PredictClient client = new PredictClient();

        private String project = "************";
        private String model = "DetectPsymbolOnOSMMap";
        private String version = "v3";

        //Only show results with score >= this
        private double thresholdSuccessPercent = 0.95;
        private String imageBase64String;
        public string resultXmlStr = null;

        //Constructor
        public PredictionCaller(string project, string model, double thresholdSuccessPercent, string imageBase64String)
        {
            this.project = project;
            this.model = model;
            //this.version = version;//OMIT and force use of DEFAULT version
            this.thresholdSuccessPercent = thresholdSuccessPercent;
            this.imageBase64String = imageBase64String;
            //Block until the prediction has completed
            RunAsync().Wait();
        }

        public async Task RunAsync()
        {
            string XMLstr = null;
            string errStr = null;
            try
            {
                Image image = new Image(this.imageBase64String);
                var instances = image.imageAsJsonForTF;

                //version left blank to force use of default version for model,
                //since version mechanism not working via json ???
                string responseJSON = await client.Predict<String, Prediction>(this.project, this.model, instances).ConfigureAwait(false);
                dynamic response = JsonConvert.DeserializeObject(responseJSON);
                int numberOfDetections = Convert.ToInt32(response.predictions[0].num_detections);

                //Create XML of detection results
                XMLstr = "<PredictionResults Project=\"" + project + "\" Model =\"" + model + "\" Version =\"" + version + "\" SuccessThreshold =\"" + thresholdSuccessPercent.ToString() + "\">";
                try
                {
                    for (int i = 0; i < numberOfDetections; i++)
                    {
                        double score = (double)response.predictions[0].detection_scores[i];
                        double[] box = new double[4];
                        for (int j = 0; j < 4; j++)
                        {
                            box[j] = (double)response.predictions[0].detection_boxes[i][j];
                        }

                        //Box order is [ymin, xmin, ymax, xmax]
                        //See https://www.tensorflow.org/versions/r0.12/api_docs/python/image/working_with_bounding_boxes
                        double box_ymin = box[0];
                        double box_xmin = box[1];
                        double box_ymax = box[2];
                        double box_xmax = box[3];

                        //Just include if score better than threshold%
                        if (score >= thresholdSuccessPercent)
                        {
                            try
                            {
                                XMLstr += "<Prediction Score=\"" + score.ToString() + "\" Xmin =\"" + box_xmin.ToString() + "\" Xmax =\"" + box_xmax.ToString() + "\" Ymin =\"" + box_ymin.ToString() + "\" Ymax =\"" + box_ymax.ToString() + "\"/>";
                            }
                            catch (Exception E)
                            {
                                errStr += "<Error><![CDATA[" + E.Message + "]]></Error>";
                            }
                        }
                    }
                }
                catch (Exception E)
                {
                    errStr += "<Error><![CDATA[" + E.Message + "]]></Error>";
                }
                finally
                {
                    if (!string.IsNullOrWhiteSpace(errStr))
                    {
                        XMLstr += errStr;
                    }
                    XMLstr += "</PredictionResults>";
                }

                //safety test that XML good
                XmlDocument xmlDoc = new XmlDocument();
                xmlDoc.LoadXml(XMLstr);
            }
            catch (Exception)
            {
                XMLstr = "<Error>CLOUD_ML_ENGINE_FAILURE</Error>";
            }
            this.resultXmlStr = XMLstr;
        }
    }
}
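
For readers more at home in Python, the result-handling step of the listing above (reading num_detections, then keeping only the boxes whose score meets the threshold) can be sketched as follows. This is an illustration rather than a port of the production code: filter_detections is a hypothetical name, and the sample response in the demo contains made-up values, not real model output.

```python
import json


def filter_detections(response_json: str, threshold: float) -> list:
    """Return (score, ymin, xmin, ymax, xmax) tuples whose score meets the threshold."""
    prediction = json.loads(response_json)["predictions"][0]
    count = int(prediction["num_detections"])
    kept = []
    for i in range(count):
        score = float(prediction["detection_scores"][i])
        if score >= threshold:
            # Boxes arrive in the order [ymin, xmin, ymax, xmax].
            ymin, xmin, ymax, xmax = prediction["detection_boxes"][i]
            kept.append((score, ymin, xmin, ymax, xmax))
    return kept


if __name__ == "__main__":
    # Made-up response with two detections, only one above the threshold.
    sample = json.dumps({"predictions": [{
        "num_detections": 2.0,
        "detection_scores": [0.99, 0.5],
        "detection_boxes": [[0.1, 0.2, 0.3, 0.4], [0.5, 0.5, 0.6, 0.6]],
    }]})
    print(filter_detections(sample, 0.95))
```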

For the ParkingRadar application, I actually built the above code into a RESTful web-service hosted on Microsoft Azure cloud where some of the ParkingRadar back-end code-stack resides. The corresponding WebApi controller code looks like this:

using System;
using System.Collections.Generic;
using System.Linq;
using System.Net;
using System.Net.Http;
using System.Web.Http;

namespace FlyRestful.Controllers
{
    public class Parameters
    {
        public string project { get; set; }
        public string model { get; set; }
        public string thresholdSuccessPercent { get; set; }
        public string imageBase64String { get; set; }
    }

    public class GoogleMLController : ApiController
    {
        [Route("***/********")] //route omitted from BLOG post
        [HttpPost]
        public string PerformPrediction([FromBody] Parameters args)
        {
            string result = null;
            try
            {
                string model = args.model;
                string project = args.project;
                string thresholdSuccessPercent = args.thresholdSuccessPercent;
                string imageBase64String = args.imageBase64String;

                //The constructor runs the prediction and blocks until the result is ready
                prediction_client.PredictionCaller pc = new prediction_client.PredictionCaller(project, model, double.Parse(thresholdSuccessPercent), imageBase64String);
                result = pc.resultXmlStr;
            }
            catch (Exception E)
            {
                result = E.Message;
            }
            return result;
        }
    }
}

...and below is an example client-side caller to this RESTful web-service (snippet taken from a C# Windows console app). This sample includes (i) code for converting a test '.png' image file into the appropriate format for encoding via JSON for consumption by the aforementioned web-service (and passing on to the TensorFlow model); (ii) calling the predictor and retrieving the prediction results; and (iii) converting the returned bounding boxes into latitude, longitude offsets (representing the centre-point location of each bounding box, since that is all ParkingRadar actually cares about!).

static async Task RunViaWebService()
{
    //NOTE: project, model, thresholdSuccessPercent, testLat, testLon and imageHeightPx
    //are defined elsewhere in the console app (not shown in this snippet)
    try
    {
        HttpClient client = new HttpClient();
        client.BaseAddress = new Uri("https://*******/***/"); //hidden on BLOG
        client.DefaultRequestHeaders.Accept.Clear();
        client.DefaultRequestHeaders.Accept.Add(new MediaTypeWithQualityHeaderValue("application/json"));
        //Set infinite timeout for long ML runs (default 100 sec)
        client.Timeout = System.Threading.Timeout.InfiniteTimeSpan;
        var predict_uri = "*******"; //hidden on BLOG

        Dictionary<string, string> parameters = new Dictionary<string, string>();
        parameters.Add("project", project);
        parameters.Add("model", model);
        parameters.Add("thresholdSuccessPercent", thresholdSuccessPercent.ToString());

        //Load a sample PNG
        string fullFile = @"ExampleRuntimeImages\FromScreenshot.png";
        //Load image file into bytes and then into Base64 string format for transport via JSON
        parameters.Add("imageBase64String", System.Convert.ToBase64String(System.IO.File.ReadAllBytes(fullFile)));

        var jsonString = JsonConvert.SerializeObject(parameters);
        var content = new StringContent(jsonString, Encoding.UTF8, "application/json");
        var responseMessage = await client.PostAsync(predict_uri, content);
        responseMessage.EnsureSuccessStatusCode();
        var resultStr = await responseMessage.Content.ReadAsStringAsync();
        Console.WriteLine(resultStr);

        //Now create lat-lon of centre point for each bounding box
        //See http://doc.arcgis.com/en/data-appliance/6.3/reference/common-attributes.htm
        //Since this data set created from ZOOM 17 on standard web mercator
        //Mapscale=1:4514 , 1 pixel=0.00001 decimal degrees (1.194329 m at equator)
        //See http://wiki.openstreetmap.org/wiki/Zoom_levels 0.003/256 = 1.1719e-5
        double pixelsToDegrees = 0.000011719;

        //Strip out string delimiters (the web-service returns the XML as a quoted, escaped JSON string)
        resultStr = resultStr.Remove(0, 1);
        resultStr = resultStr.Remove(resultStr.Length - 1, 1);
        resultStr = resultStr.Replace("\\", "");

        XmlDocument tempDoc = new XmlDocument();
        tempDoc.LoadXml(resultStr);
        XmlNodeList resNodes = tempDoc.SelectNodes("//Prediction");
        if (resNodes != null)
        {
            foreach (XmlNode res in resNodes)
            {
                double Xmin = double.Parse(res.SelectSingleNode("@Xmin").InnerText);
                double Xmax = double.Parse(res.SelectSingleNode("@Xmax").InnerText);
                double Ymin = double.Parse(res.SelectSingleNode("@Ymin").InnerText);
                double Ymax = double.Parse(res.SelectSingleNode("@Ymax").InnerText);

                //Offset of the box centre from the image centre, scaled from pixels to degrees
                //NOTE: imageHeightPx is used for both axes, which is only correct for a square image
                double lat = testLat + pixelsToDegrees * (0.5 - 0.5 * (Ymin + Ymax)) * imageHeightPx;
                double lon = testLon + pixelsToDegrees * (0.5 * (Xmin + Xmax) - 0.5) * imageHeightPx;
                Console.WriteLine("LAT " + lat.ToString() + ", LON " + lon.ToString());
            }
        }
    }
    catch (Exception E)
    {
        Console.WriteLine(E.Message);
    }
}
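
The centre-point arithmetic at the end of the snippet above can be isolated into a small function. The Python sketch below restates it under the same assumptions as the C# code: the box coordinates are fractions of the image size, the supplied latitude and longitude refer to the image centre, the image is square, and one pixel corresponds to roughly 0.000011719 degrees at zoom 17. The name box_centre_to_lat_lon and its parameters are hypothetical.

```python
def box_centre_to_lat_lon(ymin, xmin, ymax, xmax, centre_lat, centre_lon,
                          image_size_px, degrees_per_pixel=0.000011719):
    """Convert a fractional bounding box to the lat/lon of its centre point."""
    # Image y grows downwards while latitude grows upwards, hence the sign flip.
    dy = 0.5 - 0.5 * (ymin + ymax)
    dx = 0.5 * (xmin + xmax) - 0.5
    lat = centre_lat + degrees_per_pixel * dy * image_size_px
    lon = centre_lon + degrees_per_pixel * dx * image_size_px
    return lat, lon


if __name__ == "__main__":
    # A box in the upper-right quadrant lies north-east of the image centre.
    print(box_centre_to_lat_lon(0.0, 0.5, 0.5, 1.0, 51.5, -0.1, 600))
```

A box centred in the image maps back to the supplied centre coordinates, which makes a convenient sanity check.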

With the RESTful web-service (and suitable client-code) deployed on Azure, the entire project is complete. The goals have been met. The "P" symbol object detector is now live "in production" within the ParkingRadar back-end code-stack, and has been running successfully for some weeks now.

Closing Comments

If you have read this post (and especially the previous post) in its entirety, I expect you will agree that the process for implementing a Deep Learning object-detection model in TensorFlow can reasonably be described as tedious. Moreover, if you have actually implemented a similar model in a similar way, you will know just how tedious it can be. I hope the code snippets provided here may be helpful if you happen to get stuck along the way.

All that said, it is nevertheless quite remarkable, to me at least, that I was able to create a Deep Learning object detector and deploy it in "production" to the (serverless) cloud, all with open-source software, albeit with some bumps in the road. Google should be congratulated on making all that possible.