In this and a follow-on post, I provide a "beans-to-cup" overview of the entire process. I make reference to various online resources which were extremely helpful, and which present many of the details in a clear manner, so there is no need for me to repeat them here. I do, however, point out various "gotchas" which frustrated my progress, together with the workarounds that worked for me, with the aim of saving you some trouble if you face the same in your own AI endeavours.

I make no apologies for any technical decisions which may be considered sub-optimal by those who know better. As I said, this was an AI learning exercise for me, first and foremost.

The Goal

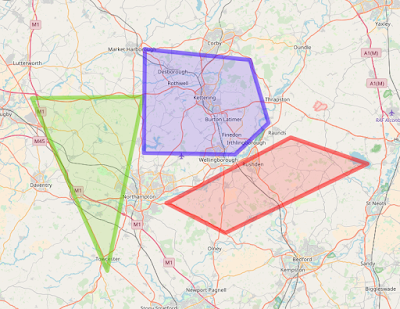

For a given geographical (latitude, longitude) location on ParkingRadar's map, determine how far away it is from a "P" symbol on the map. The screenshot below shows examples of such "P" symbols. Each represents an officially recognised parking spot or lot.

The Solution Plan

The high-level plan to reach the specified goal comprised the following steps:

- Prepare a suite of screenshot images specifically selected to contain such "P" symbols in known relative positions (e.g., with respect to the center of the given screenshot)

- Use the test images to train an AI Deep Learning object detection algorithm to recognise the "P" symbols and determine their relative positions (from which the physical coordinates in terms of latitude and longitude can be obtained)

- Deploy the trained AI in a suitable "production" framework for automated use by ParkingRadar

The Toolkit

The first decision to be made was what AI framework to use, and thereby what suite of software development tools to provision for the tasks ahead. The field is exploding right now, with many competing AI frameworks and platforms to choose from.

MATLAB ?

My first instinct was to use MATLAB, not least since the latest version focuses heavily on Machine Learning & Deep Learning (see https://www.mathworks.com/solutions/deep-learning/examples.html ). Moreover, I've used MATLAB extensively over many years, for prototyping as well as generating production code, across a variety of fields. However, I chose not to use MATLAB for present purposes -- and not because it is a legacy, closed-source, platform with commensurate annual subscription fees -- but because it was not obvious how scalable a MATLAB-based solution would be for deployment of the trained model into production. I am confident that MATLAB would be an effective framework for rapid prototyping and training of AI models, but production deployment is a different matter. That said, we have recently procured the necessary MATLAB toolboxes for investigating AI models. I aim to perform some MATLAB-based AI experiments in the near future, and I will report my findings in due course.

GLUON ?

As an extensive user of the Amazon Web Services (AWS) cloud-computing infrastructure for many years, I was enthusiastic about using their recently-announced open-source GLUON library for Deep Learning (see https://aws.amazon.com/blogs/aws/introducing-gluon-a-new-library-for-machine-learning-from-aws-and-microsoft/ ). So much so that I attempted the online step-by-step tutorials, but kept getting a Python kernel crash right when it mattered most: when trying to run their object-detection sample. In frustration, I abandoned that approach. I would like to try again at some point (presumably once whatever bugs need to be ironed out have been ironed out?) because I would welcome being able to remain within the AWS eco-system for AI along with the many other areas where I routinely use AWS. STOP PRESS: when making my decision on choice of AI framework, AWS SageMaker did not exist. It does now. Something to explore in a follow-on project.

TensorFlow

Cutting to the chase, after the GLUON failures, and having abandoned MATLAB for now, I settled upon Google's TensorFlow framework. Not least since TensorFlow seems to be the most widely-used AI framework these days, across all industries, for both prototyping and production deployment of AI models. Moreover, just around the time I was making my decision, Google open-sourced their own object-detection models and API built in TensorFlow (see https://github.com/tensorflow/models/tree/master/research/object_detection ). That clinched it.

Python

The decision to use TensorFlow, de facto led to the corresponding decision to use Python for the necessary software development surrounding the AI models since the two (TensorFlow and Python) go pretty-much hand-in-hand. Although I have been writing software in my profession for multiple decades, I had never used Python until now. So this was interesting: I had committed to embark on becoming sufficiently fluent in a new programming language, Python, in order to be able to use TensorFlow effectively. But I drew significant comfort from the fact that I was not alone: by all accounts, Python has (quite recently) become the industry-standard language for data-analysis, Machine Learning, and Deep Learning. I was confident that someone before me would have faced whatever hurdles I was about to cross, and that there would be some solution or workaround somewhere on Stack Overflow. This turned out to be largely true, thereby validating my choice. So, if you are interested in pursuing software development for Machine Learning and/or Deep Learning, and if you don't yet know Python, first thing to do is learn Python. It is simple to learn, massively supported, and extremely powerful.

Jupyter Notebooks

I had never come across these until now -- but became an instant fan. They make programming in Python very simple. Also, many of the relevant online tutorials are built around accompanying Jupyter Notebooks available via GitHub. In fact, in this entire project, I never needed to write any Python code outside of the Jupyter Notebooks environment.

Windows or Linux ?

Having decided upon TensorFlow and Python for the AI development, the decision to use Linux rather than Windows as the base operating system was essentially obvious. Again, this was going against the grain since I have been using the Windows operating system almost exclusively for all my software development environments over the many years to date. However, Google search for TensorFlow and Python quickly reveals that Linux is the platform of choice for these tools. Wanting to minimise future headaches associated with inappropriate choice of operating system, I therefore went with the herd on this: namely, I opted for Linux as the base operating system for training the AI models. There is, however, a footnote to this. When it came to building the deployment and production layers (i.e., after training the AI models), I reverted to Windows (and C#). More on that later.

Cloud-based Virtual Machine for Training

Part of the reason for being somewhat casual about choice of operating system is that I knew from the outset that I would be developing the AI models and software on a virtual machine hosted on the cloud since I had migrated all my software development activities from local "bare metal" to public cloud servers, many years ago. I also knew that the production deployment would be cloud-hosted, alongside the rest of the ParkingRadar back-end software stack.

AWS Deep Learning AMIs

Given my familiarity with AWS, it was a natural choice to deploy an AWS Deep Learning AMI (see https://aws.amazon.com/blogs/ai/get-started-with-deep-learning-using-the-aws-deep-learning-ami/ ) as the base image for my cloud-based virtual machine for training the AI. Specifically, I chose the Ubuntu version (rather than the Amazon Linux), the reason being that Ubuntu is widely used within and outwith the AWS universe -- so one could expect there would be more online support with any issues possibly encountered. AWS Deep Learning AMIs come with all the core AI framework software pre-installed, including TensorFlow and Python. Moreover, they impart the great advantage that the underlying hardware can be switched seamlessly from low-cost CPU-based EC2 instances (for initial development of the software and models) to more expensive but much more powerful GPU-based EC2 instances for actually training the models. So far, so good for the training environment. But the choice of the appropriate infrastructure / environment for eventually deploying the trained models into production was less obvious (covered later).

Configuring all the Kit

Configuring the (mostly Python / Jupyter Notebooks / TensorFlow) development environment (on the Ubuntu server spawned from the AWS Deep Learning AMI) was relatively straightforward. I just followed the relevant online tutorials. Encountered no significant "gotchas" to speak of. Having installed the core tools, the next step was to install the Google Object Detection API which includes the relevant TensorFlow models. The instructions found in the assorted links here worked without a hitch.

Solution Details

Useful Starting Example

"How to train your own Object Detector with TensorFlow's Object Detector API" was a most helpful online resource (with code samples) for gaining rapid familiarity with the API. I used many of the elements presented there, with some necessary modifications, the significant ones of which are presented below.

Creating the Learning Data Set

The Raw Images

The preparation of the training data set raw images was the most time consuming and labour-intensive task of all (from what I've read, this is a common refrain). These are the steps I followed:

- Used the OpenStreetMaps API to auto-capture the coordinates (latitude, longitude) identifying the precise locations of known "P" symbols (since ParkingRadar uses OpenStreetMaps, and the known parking spots are encoded in the underlying meta data)

- Used a screenshot grabbing app to create a 300 x 300 px image file (in '.png' format) of the ParkingRadar OpenStreetMaps map centred on each of the coordinates identified in the previous step. Regarding the screenshot grabbing app: I started by using the open-source Scrapy-Splash framework installed on the Ubuntu machine (see https://github.com/scrapy-plugins/scrapy-splash ). However, it was difficult to properly control the pre-delays (such that the map images got fully-loaded before the captures occurred), and many of the captured images were partially blank or, even worse, contained incomplete "P" symbols (which would have had significant adverse effects on the training). I was unable to find a suitable alternate screenshot-capturing library either in Python or C#, so I opted for a commercial web-service (https://urlbox.io) which comes with both Python and C# (plus Node.js, Ruby, PHP, and Java) sample wrapper code (I used C#, more on that later).

- Visually checked each file to ensure that the "P" symbol was indeed in the centre by adjusting the latitude and longitude coordinates (by hand, very laborious for approximately 1000 images -- the irony wasn't lost on me that this was precisely the task that I was hoping my AI could eventually do!). Then, for each properly-centred file, I computed a random offset (defined in terms of horizontal and vertical pixels) from the centre, and re-took the screenshot centred on these controlled offset coordinates (converted to latitude and longitude via the scale-factors in http://wiki.openstreetmap.org/wiki/Zoom_levels). In this way, the final training images contained "P" symbols in randomly-distributed but wholly-known locations. GOTCHA: in my very early attempts at training the AI, I used only centred (not randomly offset) "P" images. The AI training converged successfully, but the trained model could only detect "P" images that were centred. Perhaps I should have known or anticipated this. Anyway, by introducing the known random offsets, the later attempts were successful in detecting "P" symbols anywhere in the images.

- Created a set of bounding-box coordinates for the "P" symbol in each image, based on the known pixel offsets from the previous step. For object detection, specification of these bounding boxes along with the training images is an essential component in the training of the AI, telling it where the known "P" symbols are located, so it can learn how to identify them. In my application, I had the advantage that the map images could all be defined at a single zoom-factor (I chose "17" in OpenStreetMaps) which meant that the "P" symbols would always be the same size (both in training and when deployed in production). This meant that the bounding boxes could all be defined as squares of a fixed size. I chose 60 x 60 px which happened to enclose the "P" symbol reasonably tightly. Also, I had read that bounding boxes should generally be about 15% of the entire image. So, 60 x 60 px seemed to be about right for my 300 x 300 px image size. For convenience, rather than storing the bounding box coordinates in a separate file, the centre-point of the bounding box for a given image was encoded within the filename. Then, when processing the raw files into the format required for feeding to TensorFlow, the bounding box coordinates were computed programmatically based on the centre-points extracted from the filenames, coupled with the specified square size.
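The conversion between pixel offsets and latitude/longitude mentioned in the steps above can be sketched as follows. This is a minimal illustration rather than the code actually used for ParkingRadar: it assumes the standard Web Mercator projection with 256 px tiles, and the function names are hypothetical.

```python
import math

TILE_SIZE = 256  # assumption: standard 256 px Web Mercator tiles


def latlon_to_pixel(lat, lon, zoom):
    """Project (lat, lon) in degrees to global pixel coordinates at a zoom level."""
    scale = TILE_SIZE * 2 ** zoom
    x = (lon + 180.0) / 360.0 * scale
    y = (1.0 - math.asinh(math.tan(math.radians(lat))) / math.pi) / 2.0 * scale
    return x, y


def pixel_to_latlon(x, y, zoom):
    """Inverse of latlon_to_pixel: global pixel coordinates back to degrees."""
    scale = TILE_SIZE * 2 ** zoom
    lon = x / scale * 360.0 - 180.0
    lat = math.degrees(math.atan(math.sinh(math.pi * (1.0 - 2.0 * y / scale))))
    return lat, lon


def shift_point(lat, lon, dx_px, dy_px, zoom=17):
    """Return the (lat, lon) of the point dx_px to the right of and dy_px
    below the given point (pixel y grows downwards, i.e. southwards)."""
    x, y = latlon_to_pixel(lat, lon, zoom)
    return pixel_to_latlon(x + dx_px, y + dy_px, zoom)
```

To make a symbol located at (lat, lon) appear at an offset of (px, py) pixels from the image centre, the screenshot would be centred on `shift_point(lat, lon, -px, -py)`. Conversely, a symbol detected at offset (px, py) from the centre of a screenshot taken at (lat, lon) lies at `shift_point(lat, lon, px, py)`.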

The screenshots below show a couple of examples of the finally-prepared training images. Each image is 300 x 300 px. The accompanying bounding boxes (not shown) measure 60 x 60 px, centred on the "P" symbol in each image. Note that there is only one "P" symbol in each training image, located at a randomly selected offset from centre. The final data set comprised 1083 such files, of which 900 were used for training the model, and the remaining 183 were used for validation / testing.
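As a worked illustration of the bounding-box arithmetic, here is a small sketch that derives the normalised box from a filename. The filename pattern used below is hypothetical (chosen only so that the pixel offsets sit at the 8th and 10th underscore-separated positions), and the function name is likewise an assumption.

```python
import os

IMAGE_SIZE = 300  # px, side of the square training images
BOX_SIZE = 60     # px, side of the square bounding box


def box_from_filename(path, image_size=IMAGE_SIZE, box_size=BOX_SIZE):
    """Return (xmin, ymin, xmax, ymax), normalised to [0, 1], for the box
    centred at the pixel offset encoded in the filename."""
    pieces = os.path.basename(path).split('_')
    px = int(pieces[7])                        # horizontal offset from centre
    py = int(pieces[9].replace('.png', ''))    # vertical offset from centre
    xmin = (image_size - box_size) / 2 + px
    ymin = (image_size - box_size) / 2 + py
    xmax = xmin + box_size
    ymax = ymin + box_size
    if min(xmin, ymin) < 0 or max(xmax, ymax) > image_size:
        raise ValueError('bounding box falls outside the image: %s' % path)
    return tuple(v / image_size for v in (xmin, ymin, xmax, ymax))


# Hypothetical filename: offset of +40 px horizontally and -25 px vertically
box = box_from_filename('lat_51_5072_lon_-0_1276_px_40_py_-25.png')
print(box)
```

For this example the box spans 160 to 220 px horizontally and 95 to 155 px vertically, before dividing by the image size of 300 px.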

The TensorFlow TFRecord

TensorFlow doesn't consume the individual raw image files for training. Instead, the entire set of image files (and corresponding bounding boxes etc) need to be collated into a single entity called a TFRecord, which is then passed to the object detection model for training. Unfortunately the formal Google documentation is somewhat weak here -- especially in terms of providing worked examples. Moreover, the code is quite brittle, i.e., it is easy to get it wrong. Thankfully, however, those that have come before have shown the way. Based on the sample code in https://github.com/datitran/raccoon_dataset/blob/master/generate_tfrecord.py , here's my fully-working Python code for preparing the required TFRecord from the raw images described above. Note: a separate TFRecord is generated for the training image set and for the validation image set, respectively (commented/uncommented in code chunks as appropriate):

import os

import io

import tensorflow as tf

from object_detection.utils import dataset_util

flags = tf.app.flags

#flags.DEFINE_string('output_path', '', 'Path to output TFRecord')

#for use inside notebook, set the output dir explicitly since not a command-line arg

#Set differently for training vs validation

#TRAINING SET

flags.DEFINE_string('output_path', '/risklogical/DeeplearningImages/TFRecords/train_PR_JustPOffsetV2.records', 'Path to output TFRecord')

#VALIDATION SET

#flags.DEFINE_string('output_path', '/risklogical/DeeplearningImages/TFRecords/validate_PR_JustPOffsetV2.records', 'Path to output TFRecord')

FLAGS = flags.FLAGS

'''

where it should be obvious that the paths /risklogical/DeeplearningImages/TFRecords/train_PR_JustPOffsetV2.records and /risklogical/DeeplearningImages/TFRecords/validate_PR_JustPOffsetV2.records should be substituted with your own paths and filenames.

'''

def create_tf_fromfile(imageFile, boundingboxsize):
    # TODO(user): Populate the following variables from your example.
    # See https://github.com/datitran/raccoon_dataset/blob/master/generate_tfrecord.py
    filename=imageFile.encode('utf8') # Filename of the image. Empty if image is not from file
    #image_decoded = tf.image.decode_image(image_string)
    with tf.gfile.GFile(filename, 'rb') as fid:
        encoded_png = fid.read()
    encoded_png_io = io.BytesIO(encoded_png)
    image=Image.open(encoded_png_io)
    width, height = image.size

    #Get offsets from filename
    file_=os.path.basename(imageFile)
    pieces=file_.split('_')
    #latStr=pieces[1]+'.'+pieces[2]
    #lonStr=pieces[4]+'.'+pieces[5]
    pxStr=pieces[7]
    pyStr=pieces[9].replace('.png','')

    image_format = b'png'
    xmins = [] #normalized left x coords for bounding box
    xmaxs = [] #normalized right x coords for bounding box
    ymins = [] #normalized top y coords for bounding box
    ymaxs = [] #normalized bottom y coords for bounding box
    classes_text = [] #string class name of bounding box
    classes = [] #integer class id of bounding box
    name="PARKING_NORMAL"

    #square bounding box centred on image of side
    '''
    Programmatically define the bounding box as a square of side-length boundingboxsize whose location is offset from the image centre by (pxStr,pyStr) where these are known pre-defined quantities (see discussion above), which, for convenience, had been encoded in each respective raw filename, and extracted (see code a few lines above)
    '''
    xmin=((width-boundingboxsize)/2)+int(pxStr)
    ymin=((height-boundingboxsize)/2)+int(pyStr)
    xmax=((width+boundingboxsize)/2)+int(pxStr)
    ymax=((height+boundingboxsize)/2)+int(pyStr)

    classes_text.append(name.encode('utf8'))
    classes.append(1) #only one
    '''
    Since I'm only looking for one class of objects to detect, namely the "P" symbols, only need to define one class for the TensorFlow object detector. Give it the (arbitrary) label PARKING_NORMAL
    '''
    xmins.append(float(xmin)/width) #normalised
    xmaxs.append(float(xmax)/width) #normalised
    ymins.append(float(ymin)/height) #normalised
    ymaxs.append(float(ymax)/height) #normalised

    tf_example = tf.train.Example(features=tf.train.Features(feature={
        'image/height': dataset_util.int64_feature(height),
        'image/width': dataset_util.int64_feature(width),
        'image/filename': dataset_util.bytes_feature(filename),
        'image/source_id': dataset_util.bytes_feature(filename),
        'image/encoded': dataset_util.bytes_feature(encoded_png),
        'image/format': dataset_util.bytes_feature(image_format),
        'image/object/bbox/xmin': dataset_util.float_list_feature(xmins),
        'image/object/bbox/xmax': dataset_util.float_list_feature(xmaxs),
        'image/object/bbox/ymin': dataset_util.float_list_feature(ymins),
        'image/object/bbox/ymax': dataset_util.float_list_feature(ymaxs),
        'image/object/class/text': dataset_util.bytes_list_feature(classes_text),
        'image/object/class/label': dataset_util.int64_list_feature(classes),
    }))
    return tf_example

import os

from PIL import Image

imageFolder="/risklogical/DeeplearningImages/JustPRandomReGrabbed"

'''

This points to the folder containing the raw images described earlier. Obviously you would substitute for your own image folder

'''

def main(_):
    writer = tf.python_io.TFRecordWriter(FLAGS.output_path)
    count=0
    actual_count=0
    mincount=0 # training
    maxcount=900
    #mincount=901 # validation
    #maxcount=1083
    for root, dirs, files in os.walk(imageFolder):
        for file_ in files:
            if (count>=mincount and count<=maxcount):
                if os.path.getsize(os.path.join(root, file_)) > 5000: #only include files bigger than this otherwise may not be fully rendered
                    tf_example=create_tf_fromfile(os.path.join(root, file_),60)# boxsize 60 px for OSM Zoom of 17, images 300X300
                    writer.write(tf_example.SerializeToString())
                    actual_count=actual_count+1
                    print(file_)
            count=count+1
    writer.close()
    output_path = FLAGS.output_path #os.path.join(os.getcwd(), FLAGS.output_path)
    print('Successfully created the TFRecords: %s from %s files'%(output_path,str(actual_count)))

if __name__ == '__main__':
    tf.app.run()

The TensorFlow Object Detection Model

The Google Object Detection API includes a variety of different pre-trained model architectures. Since my requirements emphasised accuracy over speed (given that the ultimate intention was to deploy the production model on a batch scheduler rather than for real-time individual detection, more on that later), I opted for the Faster RCNN with Inception Resnet v2 trained on the COCO dataset. This was certainly not a scientifically informed choice: I simply read the background information on each model, and concluded that this ought to be suitable for my purposes. I was led by the notion that Google have been doing this for a long time and that their models are well-proven. It did not seem sensible for me to try and re-invent the wheel by attempting to define a brand new object detection neural network from scratch. By contrast, I took the approach (adopted by others before me) of starting with a pre-trained model, then training it further on my own images, in the hope that it would adapt and learn to detect my objects beyond those from its underlying dataset (COCO in this case). This turned out to be true.

Training the Object Detection Model

The Configuration File

The TensorFlow training process is controlled via a configuration file. This can be taken "as is" from the Google Object Detection API distribution, making only very minor adjustments. Here is the configuration file (faster_rcnn.config); my minor adjustments are summarised after the listing:

# Faster R-CNN with Inception Resnet v2, Atrous version;

# Configured for MSCOCO Dataset.

# Users should configure the fine_tune_checkpoint field in the train config as

# well as the label_map_path and input_path fields in the train_input_reader and

# eval_input_reader. Search for "PATH_TO_BE_CONFIGURED" to find the fields that

# should be configured.

model {

faster_rcnn {

num_classes: 1

image_resizer {

keep_aspect_ratio_resizer {

min_dimension: 300

max_dimension: 300

}

}

feature_extractor {

type: 'faster_rcnn_inception_resnet_v2'

first_stage_features_stride: 8

}

first_stage_anchor_generator {

grid_anchor_generator {

scales: [0.25, 0.5, 1.0, 2.0]

aspect_ratios: [0.5, 1.0, 2.0]

height_stride: 8

width_stride: 8

}

}

first_stage_atrous_rate: 2

first_stage_box_predictor_conv_hyperparams {

op: CONV

regularizer {

l2_regularizer {

weight: 0.0

}

}

initializer {

truncated_normal_initializer {

stddev: 0.01

}

}

}

first_stage_nms_score_threshold: 0.0

first_stage_nms_iou_threshold: 0.7

first_stage_max_proposals: 300

first_stage_localization_loss_weight: 2.0

first_stage_objectness_loss_weight: 1.0

initial_crop_size: 17

maxpool_kernel_size: 1

maxpool_stride: 1

second_stage_box_predictor {

mask_rcnn_box_predictor {

use_dropout: false

dropout_keep_probability: 1.0

fc_hyperparams {

op: FC

regularizer {

l2_regularizer {

weight: 0.0

}

}

initializer {

variance_scaling_initializer {

factor: 1.0

uniform: true

mode: FAN_AVG

}

}

}

}

}

second_stage_post_processing {

batch_non_max_suppression {

score_threshold: 0.0

iou_threshold: 0.6

max_detections_per_class: 100

max_total_detections: 100

}

score_converter: SOFTMAX

}

second_stage_localization_loss_weight: 2.0

second_stage_classification_loss_weight: 1.0

}

}

train_config: {

batch_size: 1

optimizer {

momentum_optimizer: {

learning_rate: {

manual_step_learning_rate {

initial_learning_rate: 0.0003

schedule {

step: 0

learning_rate: .0003

}

schedule {

step: 900000

learning_rate: .00003

}

schedule {

step: 1200000

learning_rate: .000003

}

}

}

momentum_optimizer_value: 0.9

}

use_moving_average: false

}

gradient_clipping_by_norm: 10.0

fine_tune_checkpoint: "/risklogical/DeeplearningImages/models/faster_rcnn_inception_resnet_v2_atrous_coco_11_06_2017/model.ckpt"

from_detection_checkpoint: true

# Note: The below line limits the training process to 200K steps, which we

# empirically found to be sufficient enough to train the pets dataset. This

# effectively bypasses the learning rate schedule (the learning rate will

# never decay). Remove the below line to train indefinitely.

num_steps: 200000

data_augmentation_options {

random_horizontal_flip {

}

}

}

train_input_reader: {

tf_record_input_reader {

input_path: "/risklogical/DeeplearningImages/TFRecords/train_PR.records"

}

label_map_path: "/risklogical/DeeplearningImages/TFRecords/label_map.pbtxt"

}

eval_config: {

num_examples: 183

# Note: The below line limits the evaluation process to 10 evaluations.

# Remove the below line to evaluate indefinitely.

max_evals: 10000

}

eval_input_reader: {

tf_record_input_reader {

input_path: "/risklogical/DeeplearningImages/TFRecords/validate_PR.records"

}

label_map_path: "/risklogical/DeeplearningImages/TFRecords/label_map.pbtxt"

shuffle: false

num_readers: 1

num_epochs: 1

}

The minor modifications are summarised as follows:

image_resizer {

keep_aspect_ratio_resizer {

min_dimension: 300

max_dimension: 300

}

}

I set these to the raw dimensions of my training images, namely 300 x 300 (pixels) on the assumption that by doing so, there would be no need for any re-sizing and hence no associated distortion. I must admit, though, I am not sure of the validity of this reasoning.
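A rough sketch of the resizing rule may help check this reasoning. It follows the behaviour commonly described for the API's keep_aspect_ratio_resizer (scale the shorter side up or down to min_dimension, unless that would push the longer side past max_dimension); it is an assumption-laden illustration with a hypothetical function name, not the API's actual implementation.

```python
def keep_aspect_ratio_size(height, width, min_dimension, max_dimension):
    """Return the (height, width) an image would be resized to, preserving
    its aspect ratio, under the rule described above."""
    # First try: make the shorter side equal to min_dimension
    scale = min_dimension / min(height, width)
    # If the longer side would then exceed max_dimension, cap it instead
    if max(height, width) * scale > max_dimension:
        scale = max_dimension / max(height, width)
    return round(height * scale), round(width * scale)


# A 300 x 300 image with both limits set to 300 keeps its size
print(keep_aspect_ratio_size(300, 300, 300, 300))
```

Under this rule a 300 x 300 px image with both dimensions set to 300 is left at its original size, which is consistent with the assumption made above.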

fine_tune_checkpoint: "/risklogical/DeeplearningImages/models/faster_rcnn_inception_resnet_v2_atrous_coco_11_06_2017/model.ckpt"

This specifies the frozen state of the pre-trained model (in the TensorFlow '.ckpt' format) from which the new training commences. The path is simply the location where the model.ckpt file is located and should obviously be substituted by your own path. The actual model.ckpt file in question was taken directly from the Google Object Detection API "as is".

train_input_reader: {

tf_record_input_reader {

input_path: "/risklogical/DeeplearningImages/TFRecords/train_PR.records"

}

label_map_path: "/risklogical/DeeplearningImages/TFRecords/label_map.pbtxt"

}

The label_map_path points to a file (label_map.pbtxt) which contains the object class labels, and should obviously be substituted by your own path. In my case, there is only one object class (corresponding to the "P" symbol object to be detected). The contents of the file are precisely as follows:

item {

id: 1

name: 'normal_parking'

}

Finally,

eval_input_reader: {

tf_record_input_reader {

input_path: "/risklogical/DeeplearningImages/TFRecords/validate_PR.records"

}

label_map_path: "/risklogical/DeeplearningImages/TFRecords/label_map.pbtxt"

shuffle: false

num_readers: 1

num_epochs: 1

}

The label_map.pbtxt is exactly the same as above.
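One consequence of the training settings in the configuration file is worth spelling out: with num_steps set to 200000, the first learning-rate drop (at step 900000) is never reached, as the comment in the file itself notes. The sketch below reproduces the schedule values from the configuration; the function name and the exact boundary behaviour (a new rate taking effect at its step) are assumptions for illustration.

```python
import bisect

INITIAL_LEARNING_RATE = 0.0003
# (step, learning_rate) pairs copied from the manual_step_learning_rate block
SCHEDULE = [(0, 0.0003), (900000, 0.00003), (1200000, 0.000003)]
NUM_STEPS = 200000


def learning_rate_at(step):
    """Return the learning rate in force at a given global training step."""
    boundaries = [s for s, _ in SCHEDULE]
    idx = bisect.bisect_right(boundaries, step) - 1
    return INITIAL_LEARNING_RATE if idx < 0 else SCHEDULE[idx][1]


# Learning rates seen during a run that stops after NUM_STEPS steps
rates_seen = {learning_rate_at(s) for s in range(0, NUM_STEPS, 1000)}
print(rates_seen)
```

Only the initial rate of 0.0003 appears in `rates_seen`, so the decay steps only matter if num_steps is raised beyond 900000 or removed.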

Executing the Training Run

This is most easily achieved by running the Python script named train.py, which is distributed with the Google Object Detection API. The script can be called from the Linux (Ubuntu) command-line, specifying the necessary parameters. To ensure correct paths, open a command-line within the TensorFlow/models-master/research folder of the API installation location, and execute the following command:

python object_detection/train.py \
    --logtostderr \
    --pipeline_config_path=/risklogical/DeeplearningImages/models/faster_rcnn_inception_resnet_v2_atrous_coco_11_06_2017/faster_rcnn.config \
    --train_dir=/risklogical/DeeplearningImages/models/faster_rcnn_inception_resnet_v2_atrous_coco_11_06_2017/train/

where the parameters are as follows:

--pipeline_config_path=/risklogical/DeeplearningImages/models/faster_rcnn_inception_resnet_v2_atrous_coco_11_06_2017/faster_rcnn.config

which points to the Configuration File described in the previous section. Obviously this should be substituted with your own path.

--train_dir=/risklogical/DeeplearningImages/models/faster_rcnn_inception_resnet_v2_atrous_coco_11_06_2017/train/

which specifies the destination for the outputs (e.g., updated model.ckpt snapshots, etc) generated during the training process. Obviously this should be substituted with your own path.
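Because both parameters hang off the same model folder, the command can also be assembled programmatically, which helps avoid path typos when switching between models. This is only a sketch under the folder layout used in this post; build_train_command is a hypothetical helper, not part of the API.

```python
import posixpath


def build_train_command(model_dir, config_name="faster_rcnn.config"):
    """Return the train.py argument list for a model folder.

    Assumes the layout used in this post: the config file sits in
    model_dir and training outputs are written to model_dir/train/.
    """
    return [
        "python",
        "object_detection/train.py",
        "--logtostderr",
        "--pipeline_config_path=" + posixpath.join(model_dir, config_name),
        "--train_dir=" + posixpath.join(model_dir, "train") + "/",
    ]


MODEL_DIR = ("/risklogical/DeeplearningImages/models/"
             "faster_rcnn_inception_resnet_v2_atrous_coco_11_06_2017")
command = build_train_command(MODEL_DIR)
print(" ".join(command))
```

The resulting list could be passed to subprocess.call with cwd set to the research folder of your own installation.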

When successfully initiated, the console should display the step-by-step training progress, as shown in the screenshot below:

For this particular model, which has a high degree of complexity, training progress is rather slow on a standard CPU-based machine such as the AWS EC2 c4.large instance type I typically use when developing software. Switching to a GPU-based machine, such as the AWS EC2 g3.4xlarge instance type, yields approximately 100 times the performance on this model for only 10 times the cost, so it is certainly cost-effective for the actual training runs. This is demonstrated in the screenshot above, where a training step is seen to take less than 1 second on the g3.4xlarge instance.
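The cost-effectiveness argument is simple arithmetic, sketched below. The 100x speed-up and 10x price ratio are the approximate figures quoted above; the baseline hours and hourly price are arbitrary placeholders, not real AWS prices.

```python
def relative_run_cost(speedup, price_ratio):
    """Cost of a training run on the faster machine relative to the slower one.

    A run that is `speedup` times faster needs 1/speedup of the hours,
    each billed at `price_ratio` times the hourly rate.
    """
    return price_ratio / float(speedup)


ratio = relative_run_cost(speedup=100, price_ratio=10)
print("GPU run costs %.0f%% of the CPU run" % (ratio * 100))

# Placeholder numbers purely for illustration (not real AWS prices).
cpu_hours, cpu_price_per_hour = 200.0, 1.0
cpu_cost = cpu_hours * cpu_price_per_hour
gpu_cost = cpu_cost * ratio
print("CPU: %.1f units, GPU: %.1f units" % (cpu_cost, gpu_cost))
```

In other words, under these figures the same training run costs roughly a tenth as much on the GPU instance, and finishes far sooner.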

Whilst this shows that the training is indeed progressing, it is not very informative. For more detailed information, the accompanying TensorBoard browser app can be invoked.

Using TensorBoard for Monitoring Training Progress

Issuing the following command in the Ubuntu console

tensorboard --logdir=/risklogical/DeeplearningImages/models/faster_rcnn_inception_resnet_v2_atrous_coco_11_06_2017/

with the logdir parameter pointing to the folder containing the model-under-training, starts an instance of TensorBoard attached to the running training job. Pointing a browser (on the same Ubuntu machine) to

http://ip-********:6006

(putting in your own IP address in place of ********) opens the TensorBoard dashboard, illustrated in the screenshot below:

To enable TensorBoard to also monitor the validation tests against the current state of the model-under-training, run the eval.py Python script in the Ubuntu console:

python object_detection/eval.py --logtostderr --pipeline_config_path=/risklogical/DeeplearningImages/models/faster_rcnn_inception_resnet_v2_atrous_coco_11_06_2017/faster_rcnn.config --checkpoint_dir=/risklogical/DeeplearningImages/models/faster_rcnn_inception_resnet_v2_atrous_coco_11_06_2017/train/ --eval_dir=/risklogical/DeeplearningImages/models/faster_rcnn_inception_resnet_v2_atrous_coco_11_06_2017/eval/

where the parameters are analogous to those for the train.py command, and their meanings by now should be (almost) self-explanatory.

GOTCHA: on occasion, this command will fail to run (generating a "CUDA_OUT_OF_MEMORY" and/or "Resource exhausted: OOM" error before terminating). The workaround is to kill all Jupyter Notebooks currently running on the server, then try again.

Once the command is running, enable eval (in the left-hand panel of TensorBoard) and navigate to the IMAGES tab, where you should see the results of the ongoing validation tests, i.e., the validation images with the corresponding bounding boxes from the detection trials. Below are some screenshots of such, demonstrating successful detection.

Ad Hoc Testing of the Trained Model Before Production Deployment

The validation images available via TensorBoard presented above demonstrate that the training has been successful and the trained model can effectively detect the "P" symbols. Before proceeding to the next phase of deploying the model to production, it is useful to present an ad hoc method for testing the trained model on an arbitrary input image, i.e., rather than just the validation set processed via the eval.py method.

The first step is to export the trained model in a format which can accept a single image as an input. The export_inference_graph.py Python script included with the Google Object Detection API does this, and can be called from the Ubuntu console as follows:

    python object_detection/export_inference_graph.py \
        --input_type image_tensor \
        --pipeline_config_path=/risklogical/DeeplearningImages/models/faster_rcnn_inception_resnet_v2_atrous_coco_11_06_2017/faster_rcnn.config \
        --trained_checkpoint_prefix=/risklogical/DeeplearningImages/models/faster_rcnn_inception_resnet_v2_atrous_coco_11_06_2017/train/model.ckpt-46066 \
        --output_directory /risklogical/DeeplearningImages/Outputs/PR_Detector_JustP_RCNN_ForJupyter

where the paths and filenames are obviously substituted with your own. GOTCHA: in the above code snippet, it is important to specify

--input_type image_tensor

since this enables single images to be presented to the model via Python code.
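The number at the end of --trained_checkpoint_prefix (46066 in the command above) is the training step of the snapshot to export. Here is a minimal sketch for picking the most recent snapshot from a listing of the train folder, assuming TensorFlow's usual checkpoint file naming; the helper latest_checkpoint_prefix and the step 45012 in the example listing are hypothetical. TensorFlow's own tf.train.latest_checkpoint serves a similar purpose when TensorFlow is available.

```python
import re

# Checkpoint files look like model.ckpt-46066.index, model.ckpt-46066.meta, ...
CKPT_PATTERN = re.compile(r"^model\.ckpt-(\d+)\.index$")


def latest_checkpoint_prefix(filenames):
    """Return 'model.ckpt-<step>' for the highest step found, or None."""
    steps = []
    for name in filenames:
        match = CKPT_PATTERN.match(name)
        if match:
            steps.append(int(match.group(1)))
    if not steps:
        return None
    return "model.ckpt-%d" % max(steps)


# In practice the list would come from os.listdir(train_dir).
example_files = [
    "checkpoint",
    "model.ckpt-45012.index",
    "model.ckpt-46066.index",
    "model.ckpt-46066.meta",
    "model.ckpt-46066.data-00000-of-00001",
]
print(latest_checkpoint_prefix(example_files))
```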

Successful execution of this method creates a 'frozen' model contained in the file named

frozen_inference_graph.pb

located in the specified output folder

/risklogical/DeeplearningImages/Outputs/PR_Detector_JustP_RCNN_ForJupyter/

This 'frozen model' can then be imported and run against an arbitrary test image in an ad hoc manner. Here is the Python code which performs such ad hoc tests.

    import numpy as np
    import os
    import six.moves.urllib as urllib
    import sys
    import tarfile
    import tensorflow as tf
    import zipfile

    from collections import defaultdict
    from io import StringIO
    from matplotlib import pyplot as plt
    from PIL import Image

    # This is needed to display the images (Jupyter notebook magic).
    %matplotlib inline

    # This is needed since the modules below live in the object_detection folder.
    sys.path.append("/home/ubuntu/workplace/TensorFlow/models-master/research/object_detection")
    from utils import label_map_util
    from utils import visualization_utils as vis_util

    # Path to frozen detection graph. This is the actual model that is used for the object detection.
    PATH_TO_CKPT = '/risklogical/DeeplearningImages/Outputs/PR_Detector_JustP_RCNN_ForJupyter/frozen_inference_graph.pb'
    labels_folder = '/risklogical/DeeplearningImages/TFRecords'

    # Label map file, used to add the correct label to each box.
    PATH_TO_LABELS = os.path.join(labels_folder, 'label_map.pbtxt')
    NUM_CLASSES = 1

    # Load the frozen graph into memory.
    detection_graph = tf.Graph()
    with detection_graph.as_default():
        od_graph_def = tf.GraphDef()
        with tf.gfile.GFile(PATH_TO_CKPT, 'rb') as fid:
            serialized_graph = fid.read()
            od_graph_def.ParseFromString(serialized_graph)
            tf.import_graph_def(od_graph_def, name='')

    # Load the label map.
    label_map = label_map_util.load_labelmap(PATH_TO_LABELS)
    categories = label_map_util.convert_label_map_to_categories(label_map, max_num_classes=NUM_CLASSES, use_display_name=True)
    category_index = label_map_util.create_category_index(categories)

    import io
    import matplotlib.image as mpimg

    PATH_TO_TEST_IMAGES_DIR = '/risklogical/DeeplearningImages/ManualTestImagesPR/TestForJustP'

    # Collect every file found under the test images folder.
    TEST_IMAGE_PATHS = []
    for root, dirs, files in os.walk(PATH_TO_TEST_IMAGES_DIR):
        for file_ in files:
            TEST_IMAGE_PATHS.append(os.path.join(root, file_))

    # Size, in inches, of the output images.
    IMAGE_SIZE = (12, 8)

    with detection_graph.as_default():
        with tf.Session(graph=detection_graph) as sess:
            for image_path in TEST_IMAGE_PATHS:
                image = Image.open(image_path)
                image_np = np.array(image)
                if image_np.shape[2] == 4:  # got a 4th channel, e.g. alpha
                    image_np = image_np[:, :, :-1]  # remove the 4th channel
                # Expand dimensions since the model expects images to have shape: [1, None, None, 3]
                image_np_expanded = np.expand_dims(image_np, axis=0)
                image_tensor = detection_graph.get_tensor_by_name('image_tensor:0')
                # Each box represents a part of the image where a particular object was detected.
                boxes = detection_graph.get_tensor_by_name('detection_boxes:0')
                # Each score represents the level of confidence for each of the objects.
                # Score is shown on the result image, together with the class label.
                scores = detection_graph.get_tensor_by_name('detection_scores:0')
                classes = detection_graph.get_tensor_by_name('detection_classes:0')
                num_detections = detection_graph.get_tensor_by_name('num_detections:0')
                # Actual detection.
                (boxes, scores, classes, num_detections) = sess.run(
                    [boxes, scores, classes, num_detections],
                    feed_dict={image_tensor: image_np_expanded})
                # Visualization of the results of a detection.
                vis_util.visualize_boxes_and_labels_on_image_array(
                    image_np,
                    np.squeeze(boxes),
                    np.squeeze(classes).astype(np.int32),
                    np.squeeze(scores),
                    category_index,
                    use_normalized_coordinates=True,
                    line_thickness=8)
                plt.figure(figsize=IMAGE_SIZE)
                plt.imshow(image_np)

GOTCHA: in the above code snippet it is essential to strip the alpha channel from the image data whenever one is present, so that the array has the three channels the model expects; otherwise the script will fail to execute:

    if image_np.shape[2] == 4:  # got a 4th channel, e.g. alpha
        image_np = image_np[:, :, :-1]  # remove the 4th channel
    # Expand dimensions since the model expects images to have shape: [1, None, None, 3]
    image_np_expanded = np.expand_dims(image_np, axis=0)
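The same channel handling can be wrapped in a small helper so that it is applied consistently. This is a sketch only: to_rgb_array is a hypothetical name, and the greyscale branch is an extra assumption of mine that the script above does not cover.

```python
import numpy as np


def to_rgb_array(image_np):
    """Return an array of shape (height, width, 3) for the detector.

    Handles RGBA input (drops the alpha channel) and, as an extra
    assumption not covered in the post, greyscale input (repeats the
    single channel three times).
    """
    if image_np.ndim == 2:            # greyscale: (height, width)
        return np.stack([image_np] * 3, axis=-1)
    if image_np.shape[2] == 4:        # RGBA: drop the alpha channel
        return image_np[:, :, :3]
    return image_np


rgba = np.zeros((4, 6, 4), dtype=np.uint8)
batch = np.expand_dims(to_rgb_array(rgba), axis=0)
print(batch.shape)
```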

Example output generated by running this ad hoc test script is shown in the screenshot below. The resulting display is analogous to those generated via TensorBoard, but with the advantage of flexibility in that the model can be run in an ad hoc manner against any test image files contained in the folder

PATH_TO_TEST_IMAGES_DIR = '/risklogical/DeeplearningImages/ManualTestImagesPR/TestForJustP'

without having to create a TFRecord from a collection of files and without having to invoke TensorBoard (obviously you would substitute with your own path and contents).
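Beyond drawing the boxes, the detection output can be turned into the relative positions that the overall goal calls for. The detection_boxes output holds [ymin, xmin, ymax, xmax] in normalized (0 to 1) coordinates. Here is a minimal sketch that converts them to pixel offsets from the center of the image; the helper name, the 0.5 score threshold and the example detections are all assumptions for illustration.

```python
def box_center_offsets(boxes, scores, image_width, image_height, min_score=0.5):
    """Return (dx, dy) pixel offsets of detected box centers from the image center.

    boxes  : iterable of [ymin, xmin, ymax, xmax], normalized to 0..1
    scores : matching confidence scores
    dx is positive to the right, dy is positive downwards.
    """
    offsets = []
    for (ymin, xmin, ymax, xmax), score in zip(boxes, scores):
        if score < min_score:
            continue  # skip low-confidence detections
        center_x = (xmin + xmax) / 2.0 * image_width
        center_y = (ymin + ymax) / 2.0 * image_height
        offsets.append((center_x - image_width / 2.0,
                        center_y - image_height / 2.0))
    return offsets


# Made-up detections for a 1000 x 800 px image.
example_boxes = [[0.25, 0.5, 0.35, 0.6], [0.0, 0.0, 0.1, 0.1]]
example_scores = [0.98, 0.20]
print(box_center_offsets(example_boxes, example_scores, 1000, 800))
```

In the test script, the inputs would be np.squeeze(boxes) and np.squeeze(scores), with the width and height taken from image.size.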

This concludes Steps 1 & 2 of the overall Solution Plan presented at the start of this post, namely the creation of a set of suitable training images, then the successful completion of the training of an object detection Deep Learning model for identifying the location of "P" symbols in ParkingRadar map screenshots. The final step is the deployment of this trained model into production. This is the topic covered in the follow-on post, where I'll also present my conclusions on the overall project.

FINAL GOTCHA: since the AI was trained with only a single "P" per bounding box, it is unable to distinguish multiple "P"s when they are closer together than the dimension of the 60 px bounding box. It can however detect multiple "P"s in a given image, as long as each is separated by 60 px or more.
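When the true "P" positions in a test image are known, the cases affected by this limitation can be flagged in advance. The sketch below rests on my own reading of the 60 px rule, namely that two symbols are "too close" when their 60 px boxes would overlap; the helper name and the example positions are hypothetical.

```python
from itertools import combinations

BOX_SIZE_PX = 60  # bounding-box dimension quoted above


def pairs_too_close(centers, box_size=BOX_SIZE_PX):
    """Return index pairs whose box_size-pixel boxes would overlap.

    Two equal, axis-aligned square boxes overlap when their centers
    differ by less than box_size along both axes.
    """
    close = []
    for (i, (x1, y1)), (j, (x2, y2)) in combinations(enumerate(centers), 2):
        if abs(x1 - x2) < box_size and abs(y1 - y2) < box_size:
            close.append((i, j))
    return close


# Made-up pixel positions of "P" symbols in one screenshot.
example_centers = [(100, 100), (130, 120), (400, 100)]
print(pairs_too_close(example_centers))
```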